MLflow: AI Engineering Platform for LLMs and Agents

MLflow is the largest open source AI engineering platform for agents and LLMs. MLflow enables teams of all sizes to debug, evaluate, monitor, and optimize production-quality AI applications, while controlling costs and managing access to models and data. With over 30 million monthly downloads, thousands of organizations rely on MLflow each day to ship AI to production with confidence.

MLflow's comprehensive feature set for agents and LLM applications includes production-grade observability, evaluation, prompt management, an AI Gateway for managing costs and model access, and much more.

Open Source

Join thousands of teams building agents and LLM applications with MLflow, a project with 20K+ GitHub stars and 50M+ monthly downloads. As part of the Linux Foundation, MLflow keeps your AI infrastructure open and vendor-neutral.

OpenTelemetry

MLflow Tracing is fully compatible with OpenTelemetry, making it free from vendor lock-in and easy to integrate with your existing observability stack.

All-in-one Platform

Manage the complete AI development journey from prototype to production. Track prompts, evaluate quality, deploy AI agents, and monitor performance in one platform.

Complete Observability

See inside every AI decision with comprehensive tracing that captures prompts, retrievals, tool calls, and LLM responses. Debug complex workflows with confidence.

Evaluation & Monitoring

Replace manual testing with LLM judges and custom metrics. Systematically evaluate every change to ensure consistent improvements in your AI applications.

Framework Integration

Use any agent framework or LLM provider. With 100+ integrations and extensible APIs, MLflow adapts to your tech stack, not the other way around.

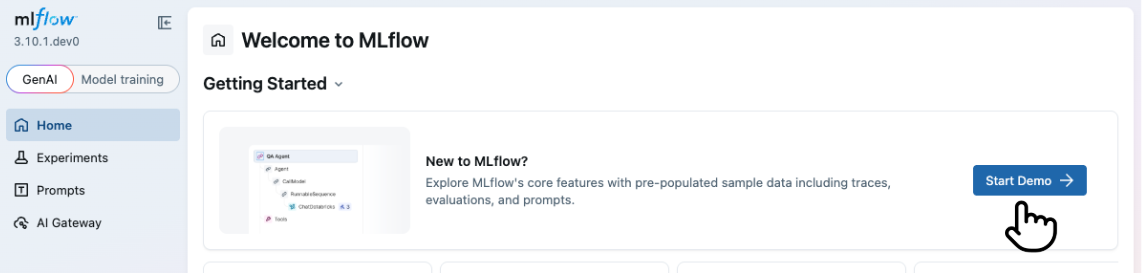

Try the MLflow LLMs and Agents Demo

The quickest way to learn about MLflow for LLMs and AI agents is to try the demo.

Observability

Debug and iterate on agents and LLM applications using MLflow Tracing, which captures your app's entire execution, including prompts, retrievals, and tool calls. MLflow's open source, OpenTelemetry-compatible tracing SDK helps you avoid vendor lock-in.
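To make "captures your app's entire execution" concrete, here is a minimal, stdlib-only sketch of the idea behind tracing: each decorated function becomes a span that records its inputs, outputs, and duration, and calls made inside it become child spans of the same trace. The `Span` class, the `traced` decorator, and the toy `retrieve`, `call_llm`, and `answer` functions are hypothetical illustrations, not MLflow's API or span schema; in MLflow itself you would use its tracing SDK (for example the `mlflow.trace` decorator or framework autologging) instead.

```python
import contextvars
import functools
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class Span:
    """Hypothetical, simplified span: one step of an app's execution."""
    name: str
    span_type: str
    inputs: Any = None
    outputs: Any = None
    children: list = field(default_factory=list)
    duration_s: float = 0.0


# Tracks the currently executing span so nested calls become child spans.
_current_span = contextvars.ContextVar("current_span", default=None)
traces = []  # completed traces, stored as their root spans


def traced(span_type: str) -> Callable:
    """Record a function's inputs, outputs, and duration as a (nested) span."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            span = Span(fn.__name__, span_type, inputs={"args": args, "kwargs": kwargs})
            parent: Optional[Span] = _current_span.get()
            if parent is None:
                traces.append(span)  # no active span: this call starts a new trace
            else:
                parent.children.append(span)
            token = _current_span.set(span)
            start = time.perf_counter()
            try:
                span.outputs = fn(*args, **kwargs)
                return span.outputs
            finally:
                span.duration_s = time.perf_counter() - start
                _current_span.reset(token)  # restore the parent as the active span
        return wrapper
    return decorator


# Toy agent: retrieval and the LLM call are stubs, not real integrations.
@traced("RETRIEVER")
def retrieve(query: str) -> list:
    return ["MLflow Tracing is compatible with OpenTelemetry."]


@traced("LLM")
def call_llm(prompt: str) -> str:
    return "Yes, it is."  # stand-in for a real model call


@traced("AGENT")
def answer(question: str) -> str:
    docs = retrieve(question)
    return call_llm(f"Context: {docs}\nQuestion: {question}")


def print_tree(span: Span, depth: int = 0) -> None:
    """Print a trace as an indented tree of spans."""
    print("  " * depth + f"{span.span_type} {span.name}: {span.outputs!r}")
    for child in span.children:
        print_tree(child, depth + 1)


answer("Is MLflow Tracing OpenTelemetry-compatible?")
print_tree(traces[0])
```

The resulting tree shows the agent span at the root with the retrieval and LLM calls nested under it, which is the structure a tracing UI lets you inspect when debugging.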

Evaluations

Use LLM-as-a-judge metrics, which mimic human expert review, to accurately measure free-form language output and improve agent quality. Start with pre-built judges for common metrics such as hallucination or relevance, or build custom judges tailored to your business needs and expert insights.
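LLM-as-a-judge works by sending the question, the app's answer, and a grading rubric to a model, then parsing the model's verdict; a custom metric, by contrast, can be plain code. The following is a minimal sketch of that loop under stated assumptions: `relevance_judge`, `max_length`, `run_eval`, `fake_app`, and `fake_judge_llm` are hypothetical names, and the "judge model" is a string-matching stub so the example runs offline. In practice `llm` would wrap a real model client. This is not MLflow's evaluation API or its pre-built judges.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# Hypothetical type alias: any function that sends a prompt to a model and returns its text.
LLM = Callable[[str], str]

JUDGE_TEMPLATE = (
    "You are an expert reviewer. Decide whether the ANSWER is relevant to the QUESTION.\n"
    "QUESTION: {question}\n"
    "ANSWER: {answer}\n"
    "Reply 'yes' or 'no' on the first line, then a one-sentence rationale."
)


@dataclass
class Feedback:
    passed: bool
    rationale: str


def relevance_judge(question: str, answer: str, llm: LLM) -> Feedback:
    """LLM-as-a-judge: ask a model to grade free-form text against a rubric."""
    reply = llm(JUDGE_TEMPLATE.format(question=question, answer=answer))
    verdict, _, rationale = reply.strip().partition("\n")
    return Feedback(passed=verdict.strip().lower().startswith("yes"), rationale=rationale.strip())


def max_length(answer: str, limit: int = 200) -> bool:
    """A deterministic custom metric that needs no LLM at all."""
    return len(answer) <= limit


def run_eval(questions: Iterable[str], app: Callable[[str], str], llm: LLM) -> dict:
    """Run the app on every question and aggregate judge and metric results."""
    rows = []
    for question in questions:
        answer = app(question)
        feedback = relevance_judge(question, answer, llm)
        rows.append({"question": question, "relevant": feedback.passed,
                     "rationale": feedback.rationale, "concise": max_length(answer)})
    return {
        "relevance_rate": sum(r["relevant"] for r in rows) / len(rows),
        "concise_rate": sum(r["concise"] for r in rows) / len(rows),
        "rows": rows,
    }


# Offline stand-ins so the sketch runs without an API key.
def fake_app(question: str) -> str:
    return "MLflow is an open source AI engineering platform." if "MLflow" in question else "No idea."


def fake_judge_llm(prompt: str) -> str:
    answer = prompt.split("ANSWER:", 1)[1]
    if "No idea" in answer:
        return "no\nThe answer does not address the question."
    return "yes\nThe answer addresses the question."


results = run_eval(["What is MLflow?", "What is the capital of Mars?"], fake_app, fake_judge_llm)
print(results["relevance_rate"], results["concise_rate"])
```

Keeping the rationale alongside the pass/fail verdict is a deliberate choice here: aggregate rates tell you whether a change helped, while per-row rationales tell you why a specific answer failed.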

Prompt Management & Optimization

Version, compare, iterate on, and discover prompt templates directly through the MLflow UI. Reuse prompts across multiple versions of your agent or application code, and view rich lineage identifying which versions are using each prompt. Accelerate development with automated prompt optimization that uses data-driven algorithms to improve your prompts without manual trial-and-error.
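The versioning and lineage ideas can be sketched with a small in-memory registry: every registration appends an immutable version, applications load a pinned version, and each load records which application version used it, so you can later ask which releases depend on a given prompt. `PromptRegistry`, its methods, and the `agent-1.0`/`agent-1.1` labels are hypothetical illustrations of the concept, not MLflow's Prompt Registry API, and the sketch does not cover automated prompt optimization.

```python
import difflib
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Hypothetical in-memory registry of immutable prompt versions plus usage lineage."""
    versions: dict = field(default_factory=dict)  # name -> [template v1, v2, ...]
    lineage: dict = field(default_factory=dict)   # (name, version) -> {app versions}

    def register(self, name: str, template: str) -> int:
        """Append a new version; earlier versions are never modified."""
        self.versions.setdefault(name, []).append(template)
        return len(self.versions[name])  # 1-based version number

    def load(self, name: str, version: int, used_by: str) -> str:
        """Return a pinned version and record which app version uses it."""
        template = self.versions[name][version - 1]
        self.lineage.setdefault((name, version), set()).add(used_by)
        return template

    def users_of(self, name: str, version: int) -> set:
        """Lineage query: which app versions have loaded this prompt version?"""
        return self.lineage.get((name, version), set())

    def compare(self, name: str, old: int, new: int) -> list:
        """Line-level diff between two versions of the same prompt."""
        return list(difflib.unified_diff(
            self.versions[name][old - 1].splitlines(),
            self.versions[name][new - 1].splitlines(),
            fromfile=f"{name} v{old}", tofile=f"{name} v{new}", lineterm="",
        ))


registry = PromptRegistry()
v1 = registry.register("qa", "Answer the question: {question}")
v2 = registry.register("qa", "Answer concisely and cite sources: {question}")

# Two releases of the same agent pin different prompt versions.
prompt_old = registry.load("qa", v1, used_by="agent-1.0")
prompt_new = registry.load("qa", v2, used_by="agent-1.1")

print(prompt_new.format(question="What is MLflow?"))
print("\n".join(registry.compare("qa", v1, v2)))
print(registry.users_of("qa", v2))
```

Making versions append-only is what keeps lineage trustworthy: an application pinned to version 1 keeps getting exactly the text it was tested with, even after version 2 is registered.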

Running Anywhere

MLflow can be used in a variety of environments, including your local machine, on-premises clusters, cloud platforms, and managed services. Because it is open source, MLflow is vendor-neutral: whether you're building AI agents, LLM applications, or ML models, you have access to MLflow's core capabilities, including tracing, evaluation, experiment tracking, deployment, and more.

Community

Connect with fellow builders, ask questions, and stay up to date — join our vibrant MLflow community on Slack, GitHub, LinkedIn, and more!

Learn how to get involved and discover all our channels on the Community Page.