Claude Code + MLflow AI Gateway

Route Claude Code through the MLflow AI Gateway to get centralized tracing and observability, while each developer authenticates with their own Anthropic subscription or API key.

Prerequisites

- MLflow server running with a SQL backend (mlflow server --port 5000)
- Claude Code installed (npm install -g @anthropic-ai/claude-code)
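You can confirm both prerequisites from a developer's machine with a quick preflight check. The sketch below uses hypothetical helpers (have_cmd, mlflow_up, preflight) that are not part of MLflow or Claude Code. It assumes the server URL http://localhost:5000 used throughout this guide, and it relies on the MLflow tracking server's /health route, which returns OK when the server is up.

```shell
# Hypothetical preflight helpers (not part of MLflow or Claude Code).

# Returns 0 if the named command is on PATH, 1 otherwise.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

# Returns 0 if the MLflow server answers on its /health route.
# Defaults to http://localhost:5000, the URL used in this guide.
mlflow_up() {
  local url="${1:-http://localhost:5000}"
  curl --silent --fail --max-time 5 "$url/health" >/dev/null 2>&1
}

# Checks the client-side tools and the server; returns 1 if anything is missing.
preflight() {
  local status=0
  for cmd in claude curl; do
    if have_cmd "$cmd"; then
      echo "ok: $cmd found"
    else
      echo "missing: $cmd (see Prerequisites)" >&2
      status=1
    fi
  done
  if mlflow_up "${1:-}"; then
    echo "ok: MLflow server is reachable"
  else
    echo "missing: no response from the MLflow server" >&2
    status=1
  fi
  return "$status"
}
```

Source the helpers in your shell, then run preflight. For a remote server, pass its URL instead, for example preflight http://mlflow.example.com:5000. That host name is a placeholder.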

Step 1: Create an Anthropic Endpoint

Navigate to the AI Gateway tab at http://localhost:5000/#/gateway and click Create Endpoint.

- Provider: Anthropic
- Model: claude-opus-4-6 (or your preferred model)
- Endpoint name: choose a name, e.g. my-claude-endpoint
- LLM Connection: select an existing connection or create a new one (see Create an LLM Connection)

The server-side API key in the LLM Connection can be set to a dummy value (e.g. dummy). When the gateway detects Claude Code's User-Agent, it does not use that key. Instead, it forwards the client's own credentials to the upstream provider: either the developer's Anthropic subscription or their own API key.

Step 2: Configure Environment Variables

Set the following environment variables so Claude Code routes through the gateway and uses your endpoint:

export ANTHROPIC_BASE_URL="http://localhost:5000/gateway/anthropic/v1"

export ANTHROPIC_MODEL="my-claude-endpoint" # your endpoint name from Step 1

Alternatively, you can pass the model on the command line instead of setting ANTHROPIC_MODEL:

claude --model my-claude-endpoint
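If you switch between endpoints or gateway hosts, a small shell function can save retyping the two exports. The sketch below is a hypothetical helper, not part of MLflow or Claude Code. It only sets the same ANTHROPIC_BASE_URL and ANTHROPIC_MODEL values shown above. The defaults (my-claude-endpoint, localhost, 5000) are the example values from this guide.

```shell
# Hypothetical helper (not part of MLflow or Claude Code): export the two
# Step 2 variables for a given endpoint name and gateway host/port.
use_mlflow_gateway() {
  local endpoint="${1:-my-claude-endpoint}"  # endpoint name from Step 1
  local host="${2:-localhost}"               # host running the MLflow server
  local port="${3:-5000}"                    # port passed to mlflow server
  export ANTHROPIC_BASE_URL="http://${host}:${port}/gateway/anthropic/v1"
  export ANTHROPIC_MODEL="${endpoint}"
}
```

Add the function to ~/.bashrc or ~/.zshrc, then run use_mlflow_gateway my-claude-endpoint before starting claude. To affect only a single run instead of the whole shell session, prefix the command with the variables instead: ANTHROPIC_BASE_URL="http://localhost:5000/gateway/anthropic/v1" claude --model my-claude-endpoint.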

Step 3: Run Claude Code

claude

Claude Code authenticates using your existing Anthropic credentials (stored in ~/.claude). Because ANTHROPIC_BASE_URL points at the gateway, all requests are proxied through it.
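If ANTHROPIC_BASE_URL is missing from a shell, Claude Code talks to Anthropic directly, and that session never shows up in MLflow. A guard like the one below refuses to launch in that case. It is a hypothetical sketch, not a Claude Code feature. It assumes you use the environment-variable form of Step 2 (ANTHROPIC_MODEL) rather than --model.

```shell
# Hypothetical guard (not part of Claude Code): returns 0 only when the
# Step 2 variables point Claude Code at an MLflow gateway endpoint.
gateway_configured() {
  case "${ANTHROPIC_BASE_URL:-}" in
    */gateway/anthropic/v1|*/gateway/anthropic/v1/) ;;  # gateway route present
    *) return 1 ;;
  esac
  [ -n "${ANTHROPIC_MODEL:-}" ]  # an endpoint name must also be set
}

# Launch Claude Code only if the gateway variables are in place;
# any arguments are passed through to claude unchanged.
claude_via_gateway() {
  if ! gateway_configured; then
    echo "ANTHROPIC_BASE_URL/ANTHROPIC_MODEL are not set for the MLflow gateway" >&2
    return 1
  fi
  claude "$@"
}
```

Run claude_via_gateway in place of claude. If you prefer passing --model on the command line, drop the ANTHROPIC_MODEL check.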

What You Get

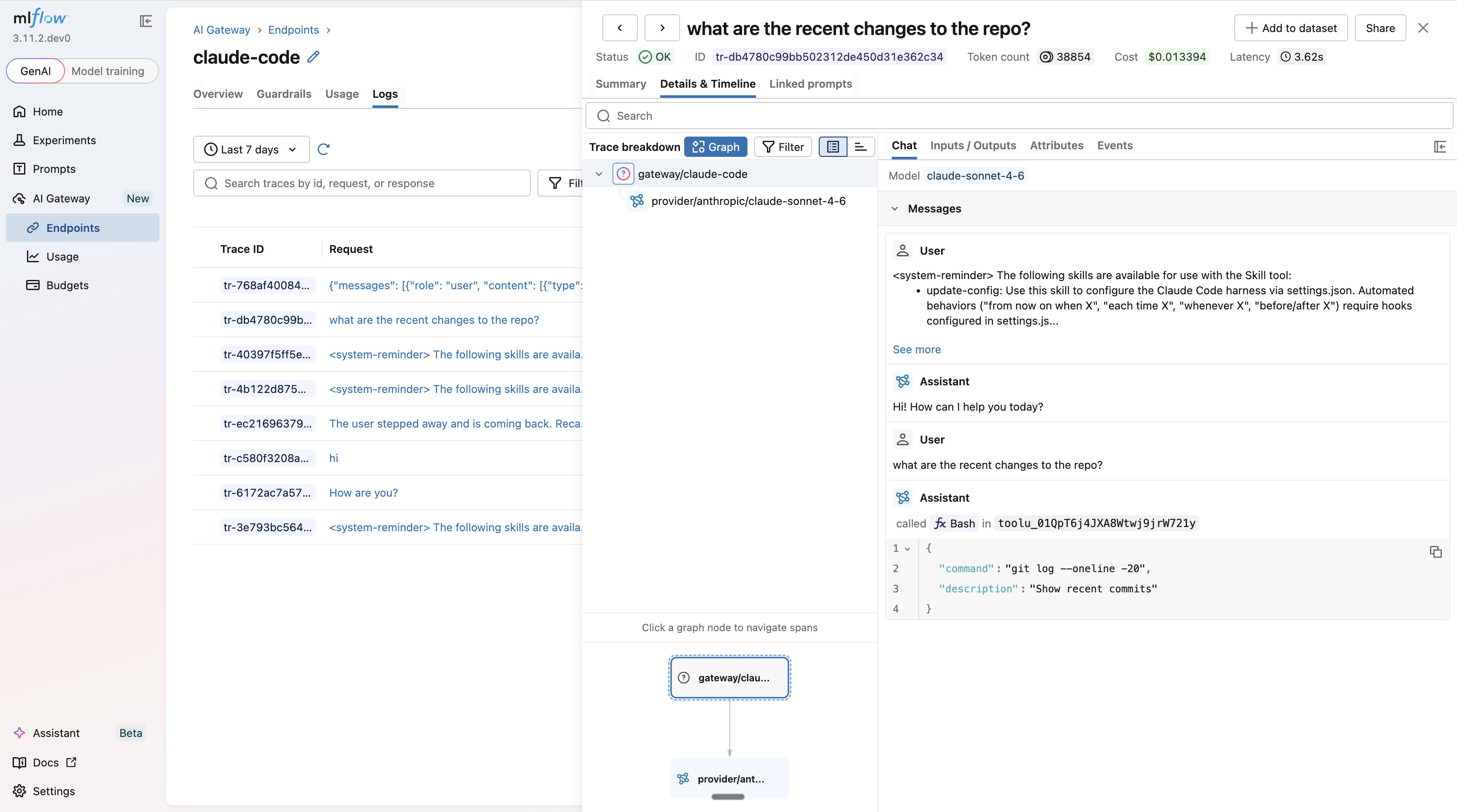

Every conversation is captured as an MLflow trace. Open the Logs tab in the MLflow UI to inspect inputs, outputs, token usage, and latency for every request.

- Usage Tracking: monitor token usage and costs across all Claude Code sessions.
- Guardrails: add content policies to all Claude Code requests automatically.
- Budget Alerts & Limits: set spending limits globally or per workspace to keep sessions within budget.